Is AI replacing software developers on a meaningful scale?

The Silicon Valley companies pouring billions of dollars into data centers are eager to sell the idea that every job is on the verge of being replaced by AI. This idea is especially prevalent in software development, where the nature of the job makes AI tools well suited to certain tasks. But how capable are these tools in real codebases? And how many developers are actually being laid off because of advances in AI tech?

Is AI good at writing code?

The current trend in AI is "agents". Agents differ from chatbots like ChatGPT in that they are given "tools" with which they can request the text content of webpages or files, send messages, and run commands. In theory, this makes agents capable of completing any task a human can do at a computer.

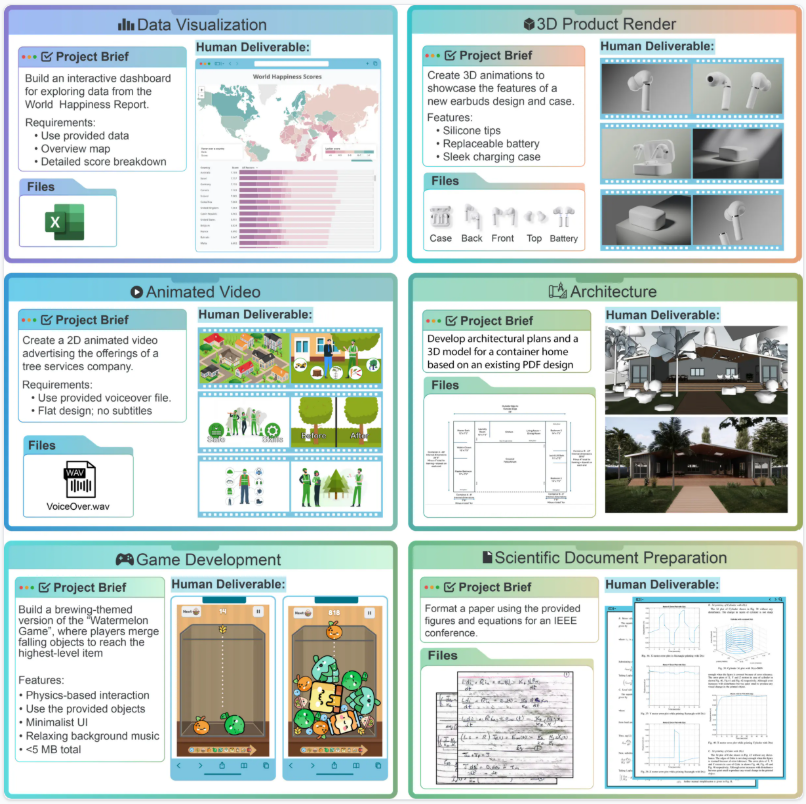
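The tool mechanism described above can be sketched in a few lines. This is a minimal illustration, not how any particular product works: `scripted_model` is a hypothetical stand-in for a real language model, and `FAKE_FILES`, `OUTBOX`, `read_file`, and `send_message` are invented names that keep the example free of side effects.

```python
from typing import Callable

# Hypothetical in-memory stand-ins so the sketch touches no real files or networks.
FAKE_FILES = {"README.md": "Run `make test` before committing."}
OUTBOX: list[str] = []


def read_file(path: str) -> str:
    """Tool: return the text content of a file (here, from a fake file table)."""
    return FAKE_FILES.get(path, f"error: {path} not found")


def send_message(text: str) -> str:
    """Tool: send a message (here, append it to an in-memory outbox)."""
    OUTBOX.append(text)
    return "sent"


# The set of tools the agent is allowed to request, keyed by name.
TOOLS: dict[str, Callable[[str], str]] = {
    "read_file": read_file,
    "send_message": send_message,
}


def scripted_model(history: list[str]) -> tuple[str, str]:
    """Stand-in for a language model: picks the next (tool, argument) pair.

    A real agent would send `history` to a model and parse its reply;
    this fixed script only illustrates the control flow.
    """
    if len(history) == 1:
        return ("read_file", "README.md")
    if len(history) == 2:
        return ("send_message", f"Summary: {history[-1]}")
    return ("done", "")


def run_agent(task: str, max_steps: int = 5) -> list[str]:
    """Loop: ask the model for a tool call, run it, feed the result back."""
    history = [task]
    for _ in range(max_steps):
        tool, arg = scripted_model(history)
        if tool == "done":
            break
        history.append(TOOLS[tool](arg))
    return history
```

Calling `run_agent("Summarize the README")` makes the scripted model read the fake file and then send one message. The point of the sketch is that the agent only ever requests actions; the surrounding program decides which tools exist and carries them out.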

The Remote Labor Index (RLI) was created to assess the capabilities of AI agents on real-world tasks. Consisting of 240 real tasks given to online freelancers, the RLI tests AI performance across several domains, including software development. The results show that “frontier AI agents perform near the floor on RLI, achieving an automation rate of less than 3%, revealing a stark gap between progress on computer use evaluations and the ability to perform real and economically valuable work” (Mazeika et al., 2025, p. 12). A year after the paper's publication, new models have achieved an automation rate of 4%, indicating that gains on this benchmark are hard to come by. These results lend credence to the idea that AI tools should not be left to complete tasks unattended, as doing so is highly unlikely to produce adequate results.

Another potential cause of job losses is increased productivity: if AI coding tools make developers faster, fewer developers are needed. This was examined by Becker et al. in “Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity”. Their research shows that experienced developers were 19% slower on average when using AI, yet believed the opposite: “on average, they forecast speedup of 24%” and “after the experiment they post-hoc estimate that they were sped-up by 20% when using AI is allowed” (Becker et al., 2025, p. 8). This research suggests that AI may act as a trap, deceiving users into believing that conversing with the tool and waiting for an output was an effective use of their time.
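To make the size of this perception gap concrete, the percentages can be applied to a single task. This is a rough sketch under stated assumptions: the 60-minute baseline is hypothetical, "19% slower" is read as taking 19% longer, and a "speedup" of 24% or 20% is read as a 24% or 20% reduction in completion time.

```python
def minutes_with_ai(baseline_minutes: float, change_in_time: float) -> float:
    """Scale a baseline task time by a fractional change (+0.19 means 19% longer)."""
    return baseline_minutes * (1 + change_in_time)


BASELINE = 60.0  # hypothetical task length without AI, in minutes (assumption)

forecast = minutes_with_ai(BASELINE, -0.24)   # what developers expected beforehand
perceived = minutes_with_ai(BASELINE, -0.20)  # what they estimated afterwards
measured = minutes_with_ai(BASELINE, +0.19)   # the slowdown the study reports

# Gap between how long the task took and how long developers believed it took.
perception_gap = measured - perceived

print(f"forecast:  {forecast:.1f} min")
print(f"perceived: {perceived:.1f} min")
print(f"measured:  {measured:.1f} min")
print(f"gap:       {perception_gap:.1f} min")
```

Under these assumptions, a task that takes an hour without AI would be expected to take about 45.6 minutes, would feel like it took about 48 minutes, and would in fact take about 71.4 minutes.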

What are the labor market trends in software development?

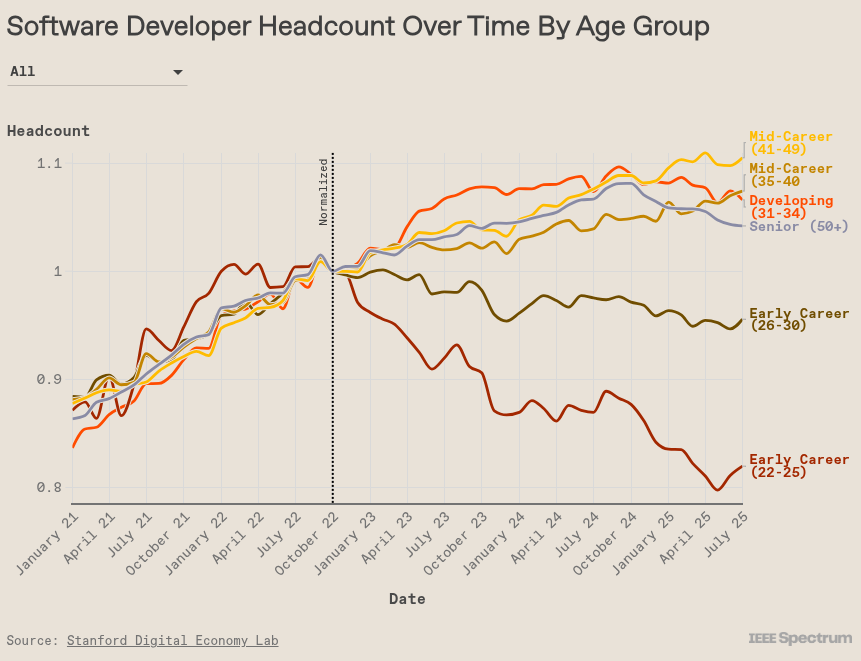

Although the RLI shows the capabilities of AI to be lacking, some programming jobs may still be getting displaced, as “generative AI tools are getting much better at doing some of the same tasks typically associated with early-career workers” (Rak, 2025, para. 4). The result is that the job market now favors more senior developers: “Since late 2022, employment for early-career software developers has dropped. Employment for other age groups, however, has seen modest growth” (Rak, 2025, para. 8).

Another important factor may be the current economic situation. When companies have to control spending, they may be less inclined to train new employees, especially in a field as complex as software development. Gwendolyn Rak, the author of an IEEE Spectrum article on the Stanford Digital Economy Lab's research into the effects of AI on the job market by age group, includes a statement from one of the researchers: “Chandar cautions that, for specific occupations, the trend may not be driven by AI alone; other changes in the tech industry could also be causing the drop” (Rak, 2025, para. 11). I believe these factors may include reduced investor confidence in other types of tech companies, which shrinks the available funding. Some companies are also increasing their rate of employee turnover, announcing large layoffs and then rehiring people into many of the same positions.

What will be the results?

By redirecting work usually done by junior developers to AI, and by requiring senior developers to deliver more and more code, companies are driving up the volume of AI-generated code. This creates a nightmare scenario for the people responsible for verifying that the code is correct and secure. In a New York Times article by Mike Isaac and Erin Griffith, Joni Klippert, co-founder and chief executive of StackHawk, described the reality facing many software companies: "The sheer amount of code being delivered, and the increase in vulnerabilities, is something they can’t keep up with". The authors added that "with anyone — not just engineers — able to spin up software ideas in a matter of hours, companies are trying to figure out how to deal with the glut" (Isaac & Griffith, 2026, para. 5).

This sentiment is echoed by journalist and market analyst Ed Zitron in his blog: "To be clear, LLMs can absolutely write code, and can absolutely create software, but neither of those mean that the code is good, stable or secure, … It’s unclear what the actual economic or productivity effects are, other than an abundance of new code that’s making running companies harder." (Zitron, 2026). The current trajectory, common among software companies, is unsustainable and will require a return to more sensible hiring and training practices.

How correct were previous predictions?

Back in 2024, when AI text generation was still rapidly evolving and agents were in their infancy, researchers from the University of Oulu in Finland theorized about the pathways AI automation could take in the software development field. They developed four scenarios of AI adoption, ranging from limited assistance to human workers to full automation with limited human oversight. Their predictions were largely correct and hold to this day: "Moving from a human-centric model such as S1 or human-dominant S2 directly to a near-fully automated model such as S4 might lead to overlooked nuances and a lack of creativity. Although AI can automate many tasks, a sudden loss of human insight, oversight, and intuition can lead to unanticipated problems, loss of architectural control, and reduced quality" (Sauvola et al., 2024, p. 5).

Conclusion

I find the results of the Becker et al. paper very illuminating, as they are consistent with my own experience of using AI for coding. There have been times when I asked AI to write some code and it did not work. I would then describe the issue and wait for another output. The broken AI code was also not easy for me to fix, since I was not its author and had to familiarize myself with it first. While newer AI models make fewer mistakes, they still don't think, resulting in unstructured and poorly readable code.

The infinite-growth mindset of the tech industry is pushing employees to deliver exponentially more code, necessitating the use of AI. The poorly written code is creating "technical debt", and when security vulnerabilities are found, they will be harder to fix. All of this code will have to be rewritten eventually, and skilled software developers will be at even more of a premium. This shortage might also force a return to more sustainable hiring and training practices, opening up more opportunities for early-career developers.

The sources are available here.